Vaibhav Sahu

AI Engineer | Computational Science @ University of Pennsylvania

I am an AI Engineer with expertise in Machine Learning, Deep Learning, and High-Performance Computing, and I am particularly interested in scientific machine learning. My experience spans LLMs and Transformers, NLP, and parallel computing. I am looking for industry opportunities to gain valuable work experience and exposure. I also enjoy playing guitar, watching anime, and playing video games.

Developed during Philly Codefest at Drexel University, Agents MD is a multi-agent AI system designed to transform emergency room triage. Multiple specialized AI models work collaboratively to refine differential diagnoses in real time, much as medical professionals work together. Key features include real-time speech-to-text transcription, AI-powered triage assessment, and a modern web interface. The project uses Python, Flask, OpenAI APIs, and AssemblyAI for speech processing.

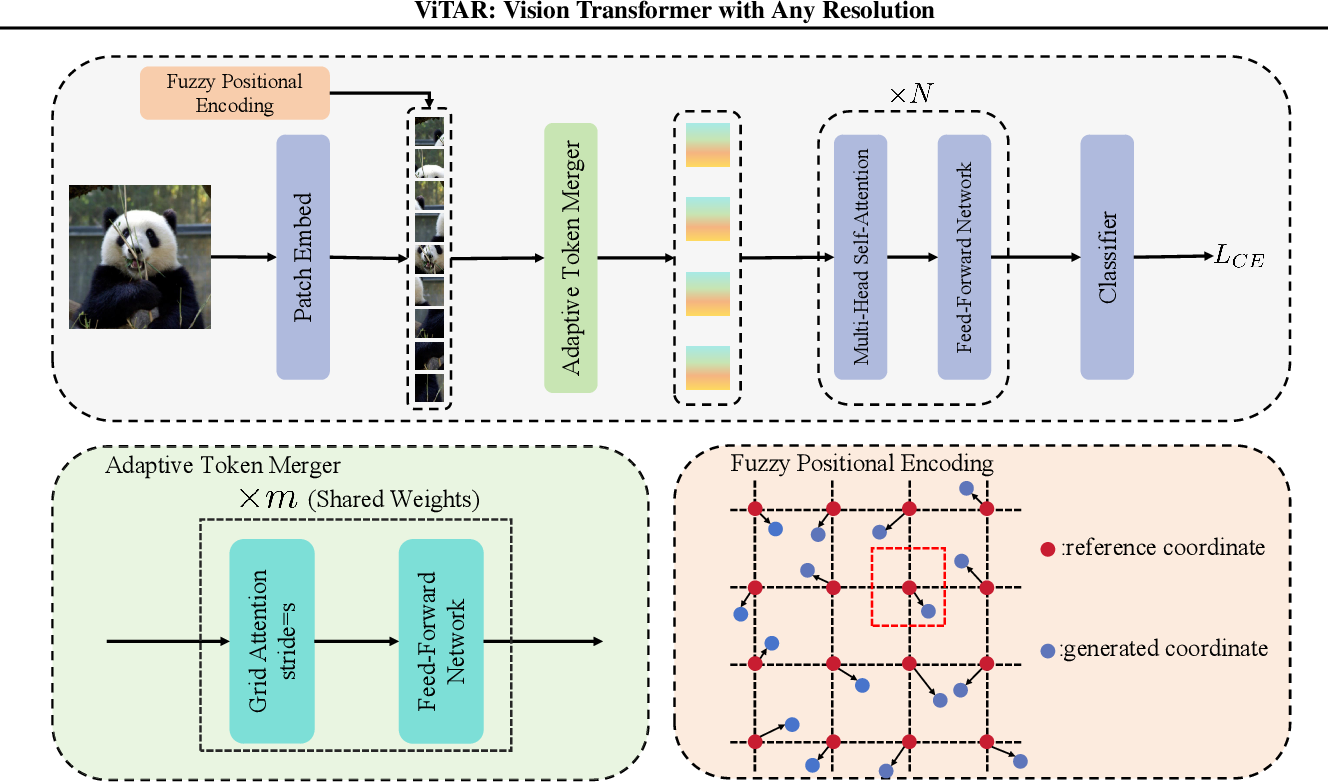

Implemented ViTAR, a novel Vision Transformer architecture that addresses the resolution scalability challenge in ViTs. The key innovations include a dynamic resolution adjustment module with a single Transformer block for efficient incremental token integration, and fuzzy positional encoding that provides consistent positional awareness across multiple resolutions. The implementation achieves impressive results: 83.3% top-1 accuracy at 1120x1120 resolution and 80.4% accuracy at 4032x4032 resolution, while reducing computational costs. The model also demonstrates strong performance in downstream tasks like instance and semantic segmentation, and can be easily combined with self-supervised learning techniques like Masked AutoEncoder.

Hangman is a surprisingly hard problem to tackle. Beyond extensive knowledge of words, it requires guessing letters based on phonetics and the rules governing the sub-words of a language, all within a limited number of tries. In this project, I trained a Google CANINE-s character-level LLM from scratch on hangman states generated from 380k words and encoded as text. The model achieved a 56% game-winning accuracy with 6 wrong guesses allowed. I then fine-tuned it further by having it play games on the training data and learn from its own mistakes, reaching 63% accuracy with 6 tries and 86% accuracy with 10 tries. A demo can be found here.
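To make "hangman states encoded as text" concrete, here is a minimal sketch of how such training examples could be generated from a word list. The `_` mask character, the `[SEP]` separator, and the function names are assumptions made for illustration; they are not necessarily the encoding used in the project.

```python
import random

MASK = "_"  # assumed placeholder for letters that are still hidden
ALPHABET = "abcdefghijklmnopqrstuvwxyz"


def make_state(word, guessed):
    """Return the board string: guessed letters shown, all others masked."""
    return "".join(ch if ch in guessed else MASK for ch in word)


def sample_training_example(word, rng):
    """Sample one (text, targets) pair from a random mid-game position.

    text    -- the masked word plus the wrong guesses so far (hypothetical format)
    targets -- the letters that would be correct next guesses
    """
    letters = sorted(set(word))
    # Reveal a random subset, always leaving at least one letter hidden.
    revealed = set(rng.sample(letters, rng.randrange(len(letters))))
    targets = [c for c in letters if c not in revealed]
    # Add up to 5 wrong guesses drawn from letters that are not in the word.
    wrong_pool = [c for c in ALPHABET if c not in word]
    n_wrong = min(rng.randrange(6), len(wrong_pool))
    wrong = sorted(rng.sample(wrong_pool, n_wrong))
    text = f"{make_state(word, revealed)} [SEP] {''.join(wrong)}"
    return text, targets


if __name__ == "__main__":
    rng = random.Random(0)
    for _ in range(3):
        print(sample_training_example("hangman", rng))
```

A character-level model can then be trained to predict the target letters from the text, and the same generator can be reused to replay positions where the model guessed wrong.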

Synthetic data machine learning involves using artificially generated data, created by algorithms, to train machine learning models. This approach can enhance privacy, expand datasets, and improve model robustness by simulating diverse scenarios not covered by real data. Generative AI algorithms can efficiently produce high-quality synthetic data that closely mimics real-world distributions, thereby enabling more effective and scalable training of machine learning models. The notebook can be found here.

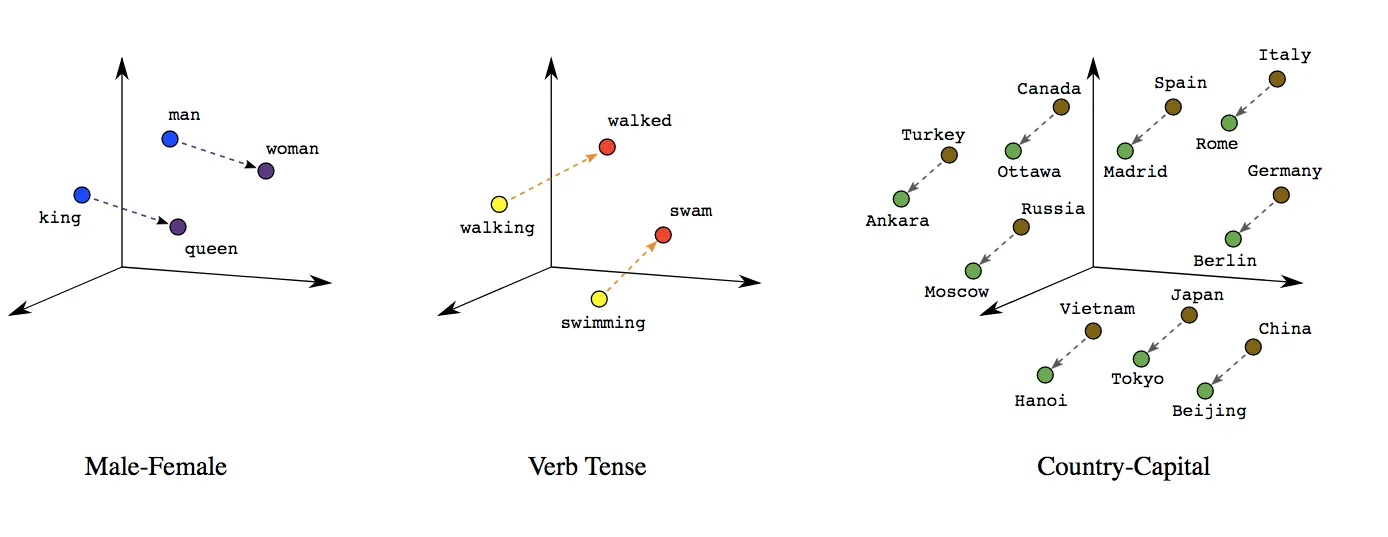

Word embeddings from Large Language Models can be used in many tasks, from sentiment analysis to textual style transfer and finding synonyms in other contexts. This project used BERT word embeddings to characterize the complexity and formality level of given words and documents. We build on the implementation from Lyu et al. and extend it in three ways: reducing anisotropy through k-means clustering, fine-tuning BERT models to perform the same task at the document level, and using similarity metrics other than cosine similarity. Our results show that reducing anisotropy through k-means clustering improves performance, and that the new similarity metrics improve on cosine similarity for some features.
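As an illustration of the anisotropy idea, the sketch below clusters a set of vectors with a small k-means and subtracts each cluster's centroid, then compares the mean pairwise cosine similarity before and after. This is one simple variant chosen for illustration, run on toy 3-dimensional vectors instead of BERT embeddings; the exact procedure used in the project may differ, and all function names here are hypothetical.

```python
import math
import random


def cosine(u, v):
    """Cosine similarity, defined as 0.0 when either vector is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu and nv else 0.0


def mean_pairwise_cosine(vectors):
    """Average cosine similarity over all pairs; high values indicate anisotropy."""
    n = len(vectors)
    sims = [cosine(vectors[i], vectors[j]) for i in range(n) for j in range(i + 1, n)]
    return sum(sims) / len(sims)


def kmeans(vectors, k, iters=20, rng=None):
    """Plain Lloyd's algorithm; returns (labels, centroids)."""
    rng = rng or random.Random(0)
    centroids = [list(v) for v in rng.sample(vectors, k)]
    labels = [0] * len(vectors)
    for _ in range(iters):
        labels = [
            min(range(k), key=lambda c: sum((a - b) ** 2 for a, b in zip(v, centroids[c])))
            for v in vectors
        ]
        for c in range(k):
            members = [v for v, lab in zip(vectors, labels) if lab == c]
            if members:  # keep the old centroid if a cluster ends up empty
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return labels, centroids


def center_by_cluster(vectors, k, rng=None):
    """Subtract each vector's cluster centroid to remove the shared offset."""
    labels, centroids = kmeans(vectors, k, rng=rng)
    return [[a - b for a, b in zip(v, centroids[lab])] for v, lab in zip(vectors, labels)]


if __name__ == "__main__":
    rng = random.Random(0)
    # Toy "embeddings" that all share a large common offset.
    vecs = [[5 + rng.gauss(0, 1), 5 + rng.gauss(0, 1), rng.gauss(0, 1)] for _ in range(40)]
    print("before:", mean_pairwise_cosine(vecs))
    print("after: ", mean_pairwise_cosine(center_by_cluster(vecs, k=2)))
```

With real embeddings, the centered vectors would then be passed to whatever similarity metric scores complexity or formality.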

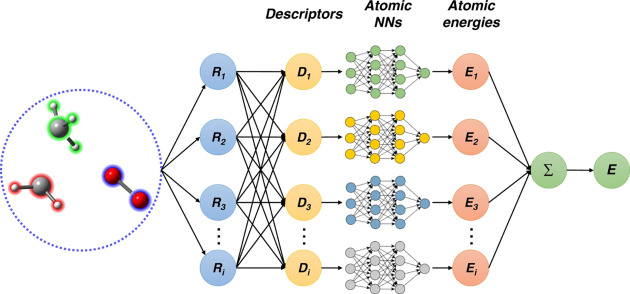

Machine learning has been rapidly gaining traction as a tool for modeling potentials in molecular modeling and simulation. Early neural network potentials (NNPs), such as that of Behler and Parrinello, relied on careful feature design to preserve the symmetries of the potential energy surface (PES). We analyze DeePMD, a modern up-and-coming NNP framework, examining the energy landscape of its neural network potentials and how well it predicts the melting behavior of copper systems.

AI has revolutionized the field of security, among many others, and facial recognition is one of its most useful applications. During the pandemic, humans became increasingly good at recognizing people wearing masks. Can AI do the same?

Monte Carlo simulations are among the oldest and most popular ways to determine probability distributions and evaluate integrals! They are also used to simulate processes in statistical physics. The Ising model is a mathematical model used in statistical physics to study the behavior of interacting spins in a system. One problem with the single-spin-flip Metropolis algorithm is that it slows down tremendously near the critical point. Here's a neat way to solve this problem using the Wolff cluster algorithm.
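For readers who want to see the idea in code, here is a minimal sketch of a single Wolff cluster update for the 2D Ising model on an L x L square lattice with periodic boundaries. It assumes zero external field and a ferromagnetic coupling `J`; the function and parameter names are illustrative and not taken from the project. Aligned neighbors join the cluster with probability 1 - exp(-2 * beta * J), and the whole cluster is flipped at once.

```python
import math
import random


def wolff_step(spins, L, beta, J=1.0, rng=random):
    """Grow one Wolff cluster from a random seed site and flip it.

    spins is an L x L list of lists holding +1 or -1. Returns the cluster size.
    """
    p_add = 1.0 - math.exp(-2.0 * beta * J)  # bond activation probability
    i, j = rng.randrange(L), rng.randrange(L)
    seed_spin = spins[i][j]
    # Flipping a site as soon as it joins also marks it as visited,
    # because it no longer matches seed_spin.
    spins[i][j] = -seed_spin
    stack = [(i, j)]
    size = 1
    while stack:
        x, y = stack.pop()
        neighbors = (
            ((x + 1) % L, y),
            ((x - 1) % L, y),
            (x, (y + 1) % L),
            (x, (y - 1) % L),
        )
        for nx, ny in neighbors:
            if spins[nx][ny] == seed_spin and rng.random() < p_add:
                spins[nx][ny] = -seed_spin
                stack.append((nx, ny))
                size += 1
    return size


if __name__ == "__main__":
    L = 16
    rng = random.Random(42)
    spins = [[rng.choice((-1, 1)) for _ in range(L)] for _ in range(L)]
    beta_c = math.log(1 + math.sqrt(2)) / 2  # exact 2D critical point for J = 1
    sizes = [wolff_step(spins, L, beta_c, rng=rng) for _ in range(1000)]
    print("mean cluster size near criticality:", sum(sizes) / len(sizes))
```

Near the critical point the clusters become large, so each update changes many correlated spins at once, which is what lets the cluster algorithm avoid the slowdown that single spin flips suffer from.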